Why build the box

Serious external-aero CFD does not fit on a single laptop, and the cases change often enough that renting cloud cycles for every iteration is a poor fit. The point was to own the compute: more cores than a laptop, turnaround that supports daily iteration, access from anywhere, and real visibility into what the cluster is doing.

What it is

A local multi-machine compute setup wired for parallel CFD and numerical workloads. MPI ties the nodes together. The day-to-day workflow is:

- queue a case from a laptop or remote session,

- the cluster runs it under MPI,

- residuals, force histories, and surface fields stream back during the run rather than at the end,

- monitoring catches a stalled run well before the wall-clock limit would.
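One way the stall check in the last step could work is a watchdog on the solver's iteration counter. The sketch below is an assumption, not the cluster's actual monitoring code: `Sample`, `is_stalled`, and the 600-second default are hypothetical, and it presumes something else has already parsed (wall time, iteration) pairs out of the solver log.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Sample:
    wall_time: float  # seconds since the run started (hypothetical field)
    iteration: int    # last solver iteration seen in the log at that time


def is_stalled(samples: Sequence[Sample], now: float, timeout_s: float = 600.0) -> bool:
    """Return True if the iteration counter has not advanced for timeout_s seconds."""
    if not samples:
        # Nothing logged yet: treat the run start (t = 0) as the last progress.
        return now >= timeout_s

    last_iter = samples[-1].iteration
    first_seen = samples[-1].wall_time
    # Walk backwards to find when the current iteration count first appeared.
    for s in reversed(samples):
        if s.iteration != last_iter:
            break
        first_seen = s.wall_time
    return (now - first_seen) >= timeout_s
```

Keying the check on the iteration counter rather than on log-file modification time means a solver that keeps writing warnings without advancing still counts as stalled.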

Workloads it runs

The cluster is solver-agnostic; what runs on it is whatever the work needs.

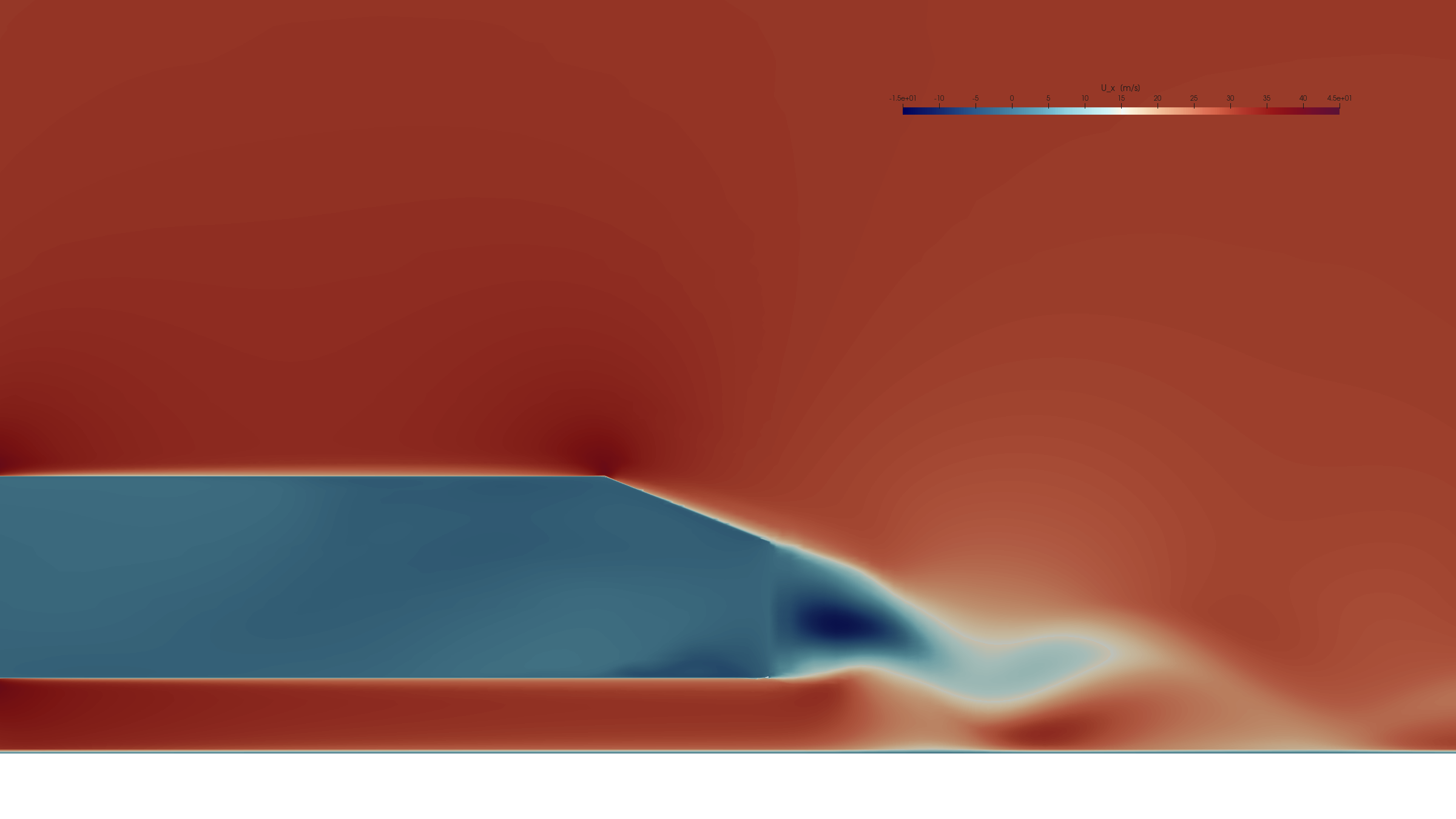

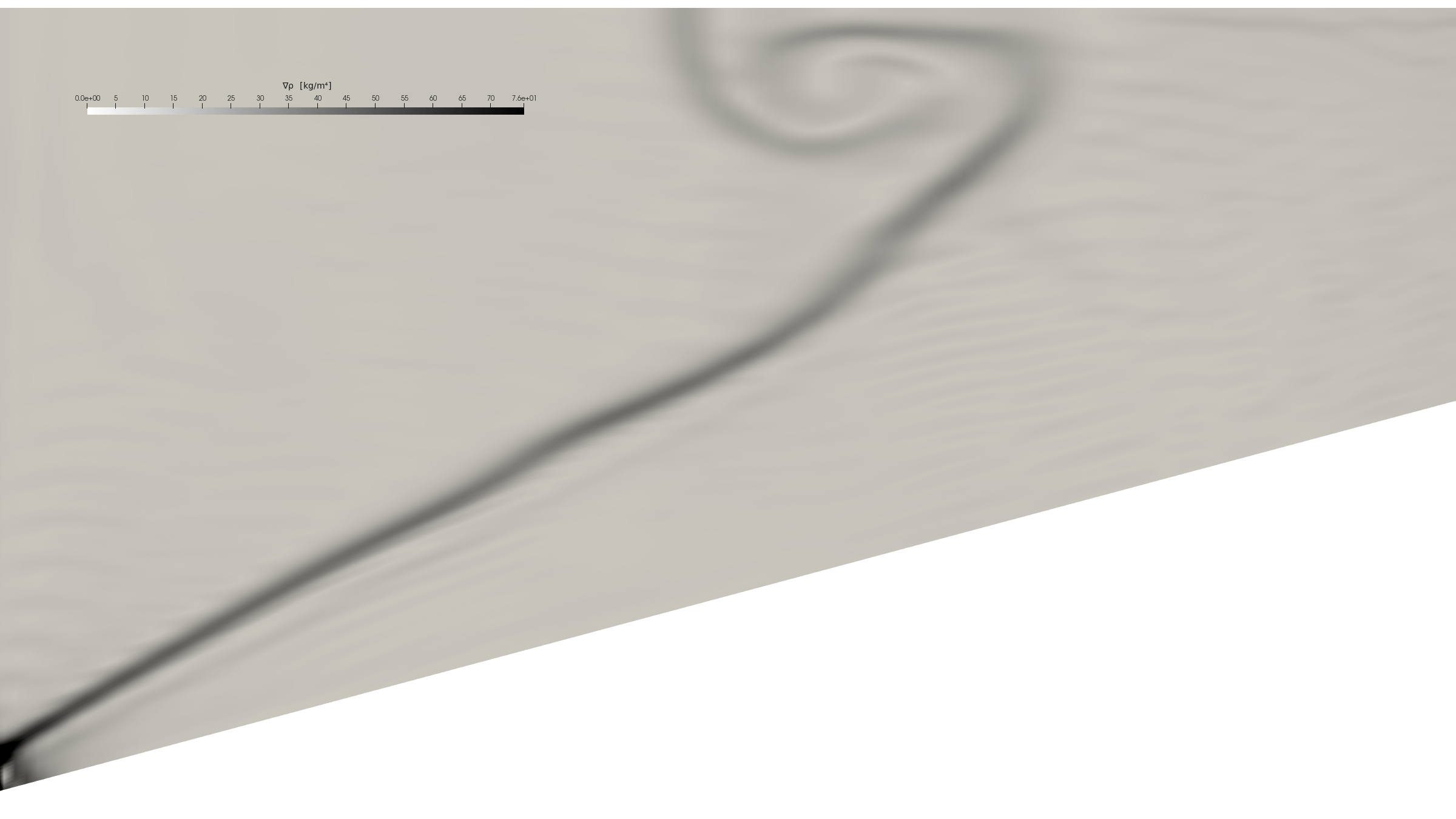

- OpenFOAM — the aeroAUTO Ahmed-body workflow (templated cases, parallel solve, automated post). The figures on the aeroAUTO page come from runs on this cluster.

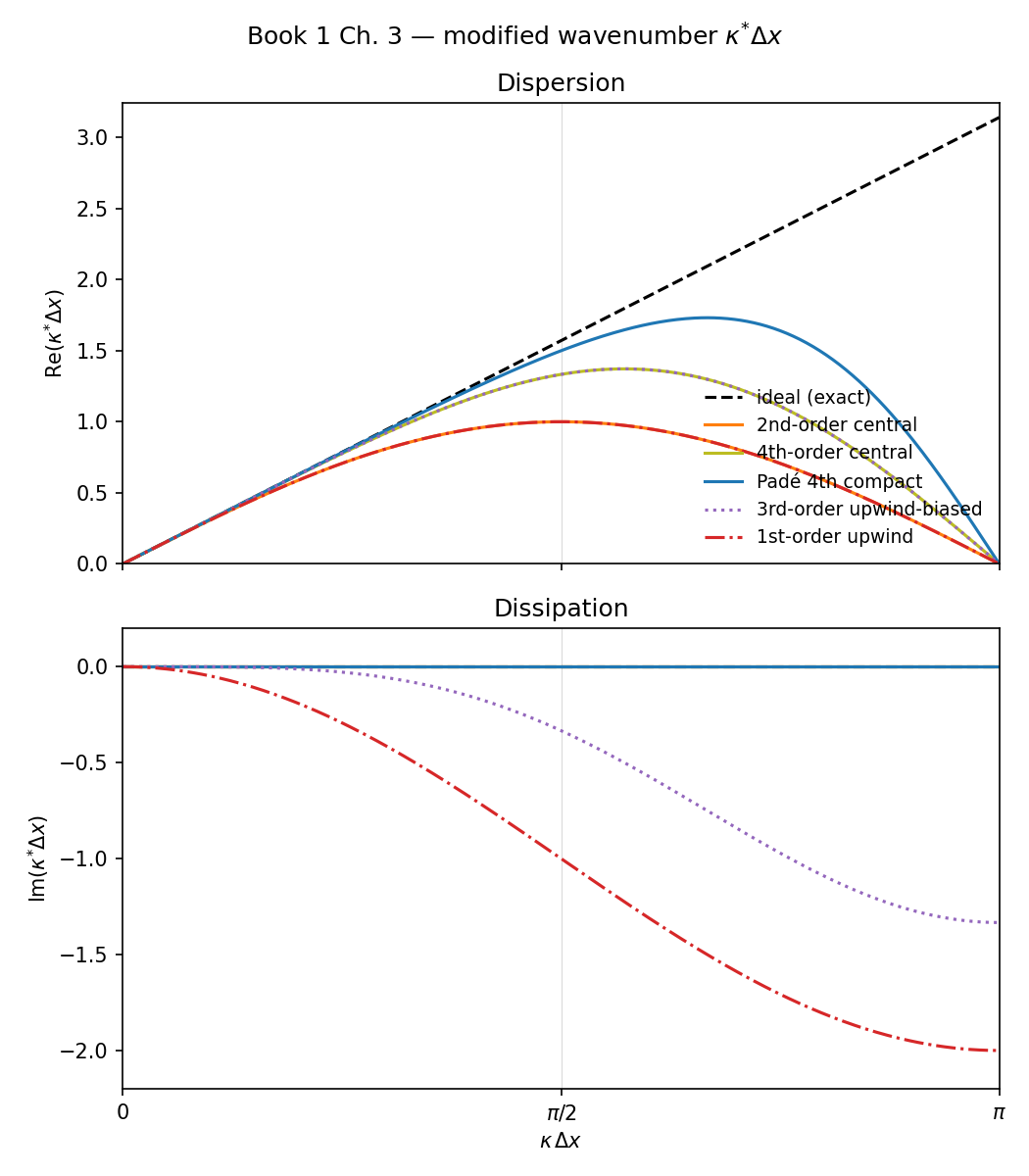

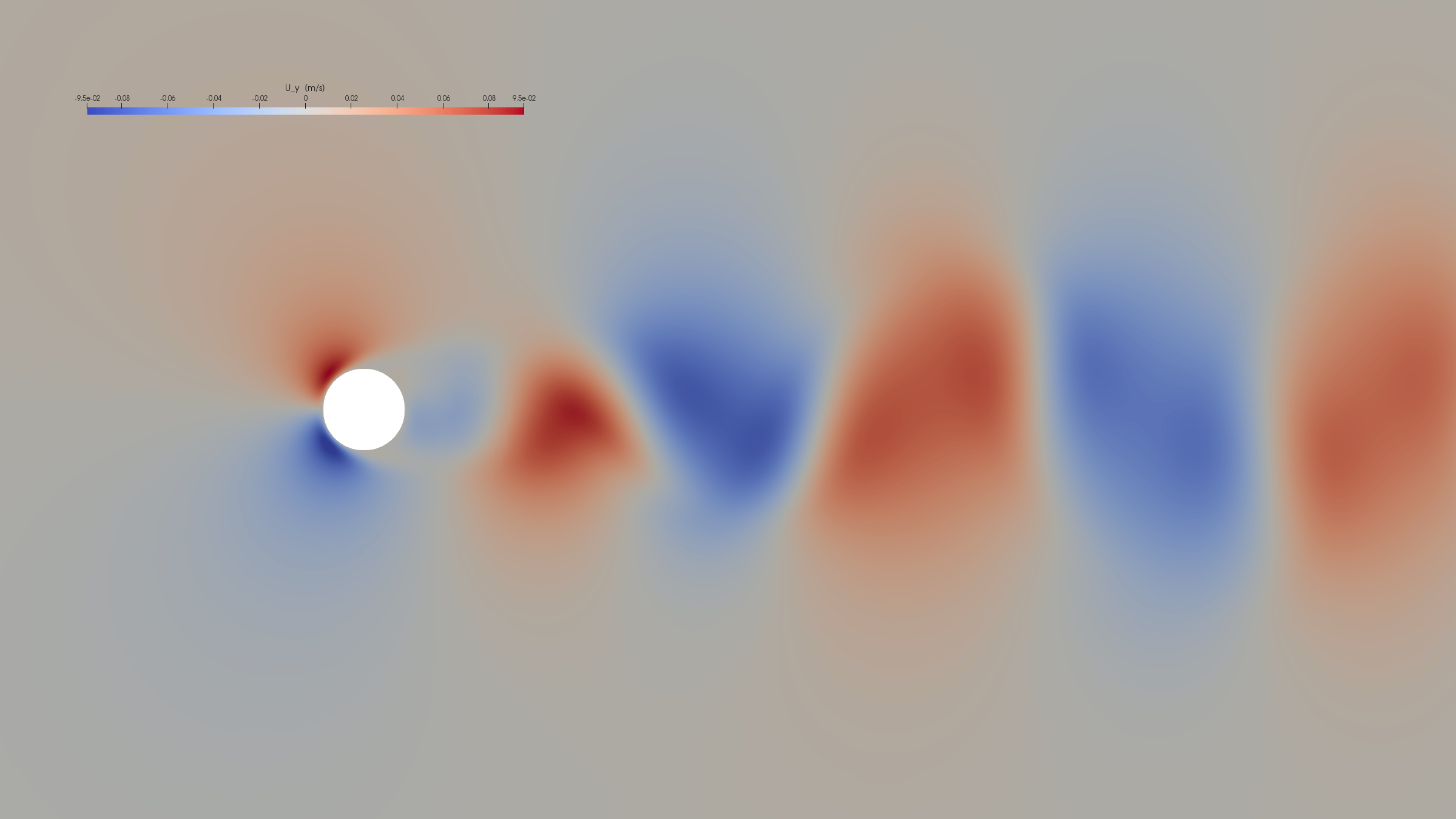

- Nektar++ — high-order spectral/hp runs, including the cylinder-2D scaling work used to characterise per-node throughput.

- Other one-off numerical experiments where parallelism or just more cores beat waiting on a laptop.
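Scaling work like the cylinder-2D study usually reduces to speedup and parallel efficiency relative to the smallest run. A minimal sketch, assuming wall times per node count have already been collected; `scaling_table` is a hypothetical helper, not part of Nektar++ or of this cluster's tooling.

```python
from typing import Dict, List, Tuple


def scaling_table(wall_times: Dict[int, float]) -> List[Tuple[int, float, float]]:
    """Return (nodes, speedup, efficiency) rows relative to the smallest node count.

    wall_times maps node count -> wall-clock seconds for the same case.
    """
    if not wall_times:
        raise ValueError("need at least one timing")
    base_n = min(wall_times)
    base_t = wall_times[base_n]
    rows = []
    for n in sorted(wall_times):
        speedup = base_t / wall_times[n]
        # Ideal speedup from base_n to n nodes is n / base_n.
        efficiency = speedup * base_n / n
        rows.append((n, speedup, efficiency))
    return rows
```

Efficiency that falls away quickly as nodes are added suggests the case is too small for the node count or that the interconnect is the bottleneck.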

What it earns

What the cluster pays back is iteration discipline. Running cases locally, and watching them run, surfaces issues that a black-box rented run would hide: a residual that ticks up at restart, a y⁺ that drifts after remeshing, a per-node load trace showing that one rank is doing all the work. The point of the rack is not the FLOPs; it is the visibility.
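The "one rank is doing all the work" symptom can be reduced to a single number. The sketch below is one common choice, not the metric this cluster's monitoring necessarily uses: `load_imbalance` is a hypothetical helper that takes per-rank busy time (in any consistent unit) and returns max over mean.

```python
from typing import Sequence


def load_imbalance(per_rank_busy: Sequence[float]) -> float:
    """Return max/mean of per-rank busy time; 1.0 means perfectly balanced."""
    if not per_rank_busy:
        raise ValueError("need at least one rank")
    mean = sum(per_rank_busy) / len(per_rank_busy)
    if mean == 0.0:
        # No rank did any work, so there is nothing to be imbalanced about.
        return 1.0
    return max(per_rank_busy) / mean
```

A value close to the number of ranks means a single rank carries nearly the whole load, which usually points at the domain decomposition rather than the hardware.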